Slaughterbots are here.

The era in which algorithms decide who lives and who dies is upon us. We must act now to prohibit and regulate these weapons.

Our resources on Autonomous Weapons

We have produced several resources to support the push to regulate autonomous weapons. Here are a few that we would like to highlight:

The Autonomous Weapons Newsletter

Autonomous Weapons Watch

A Diplomat’s Guide to Autonomous Weapons

A quick-start guide to autonomous weapons and the policy landscape surrounding them. Intended for diplomats who are new to the topic or need a primer, our comprehensive guide covers: what autonomous weapons systems (AWS) are; why they pose significant risks; common myths and misconceptions; a timeline of developments on the way to a treaty; States’ positions; and more.

What are autonomous weapons systems?

Slaughterbots, also called “autonomous weapons systems” or “killer robots”, are weapons systems that use artificial intelligence (AI) to identify, select, and kill human targets without human intervention.

Whereas in the case of unmanned military drones the decision to take a life is made remotely by a human operator, with autonomous weapons the decision is made by algorithms alone.

An autonomous weapon system is pre-programmed to kill a specific “target profile.” The weapon is then deployed into an environment where its AI searches sensor data for that “target profile,” for example by using facial recognition.

When the weapon encounters someone or something the algorithm perceives to match its target profile, it fires and kills.

What’s the problem?

Weapons that use algorithms to kill, and are not controlled by human judgement, are immoral, a grave threat to national and global security, and leave no one clearly accountable.

1. Immoral

Algorithms are incapable of comprehending the value of human life, and so should never be empowered to decide who lives and who dies. United Nations Secretary-General António Guterres agrees that “machines with the power and discretion to take lives without human involvement are politically unacceptable, morally repugnant and should be prohibited by international law.”

2. Threat to Security

Algorithmic decision-making allows weapons to follow the trajectory of software: faster, cheaper, and at greater scale. This will be highly destabilising at both the national and the international level, because it introduces the threats of proliferation, rapid escalation and unpredictability, and even the potential for weapons of mass destruction.

3. Lack of accountability

Delegating the decision to use lethal force to algorithms raises significant questions about who is ultimately responsible and accountable for the use of force by autonomous weapons, particularly given their tendency towards unpredictability.

Videos on Slaughterbots

The urgent fight to stop Slaughterbots

The majority of UN states support a legally binding treaty on autonomous weapons that would prohibit the most unpredictable and dangerous systems and regulate those that can be used with meaningful human control. Will your country support legally binding rules on autonomous weapons systems?

26 March 2024

Slaughterbots

Featured in: BBC Newsnight | BBC Panorama | News Show | Das Erste | The Atlantic | The Times | IEEE Spectrum | Futurism | The Guardian | The Economist | + more…

13 November 2017

Slaughterbots – if human: kill()

In 2017, Slaughterbots warned the world of what was coming. Its 2021 sequel showed that autonomous weapons systems have well and truly arrived. Will humanity prevail?

30 November 2021

Reality – not science fiction.

Terms like “slaughterbots” and “killer robots” remind people of science fiction movies like The Terminator, which features a self-aware, human-like robot assassin. This fuels the assumption that autonomous weapons systems belong to the far future.

But that is incorrect.

In reality, weapons which can autonomously select, target, and kill humans are already here.

A 2021 report by the U.N. Panel of Experts on Libya documented the use of an autonomous weapon system hunting down retreating forces. Since then, there have been numerous reports of swarms and other autonomous weapons systems being used on battlefields around the world.

The accelerating pace of these deployments is a clear warning that time to act is quickly running out.

Milestones

Mar 2021

First documented use of an autonomous weapons system

Jun 2021

First documented use of a drone swarm in combat

Feb 2023

Latin American and the Caribbean Conference on the Social and Humanitarian Impact of Autonomous Weapons. First-ever regional conference on autonomous weapons outside of the CCW

Apr 2023

Luxembourg Autonomous Weapons Systems Conference

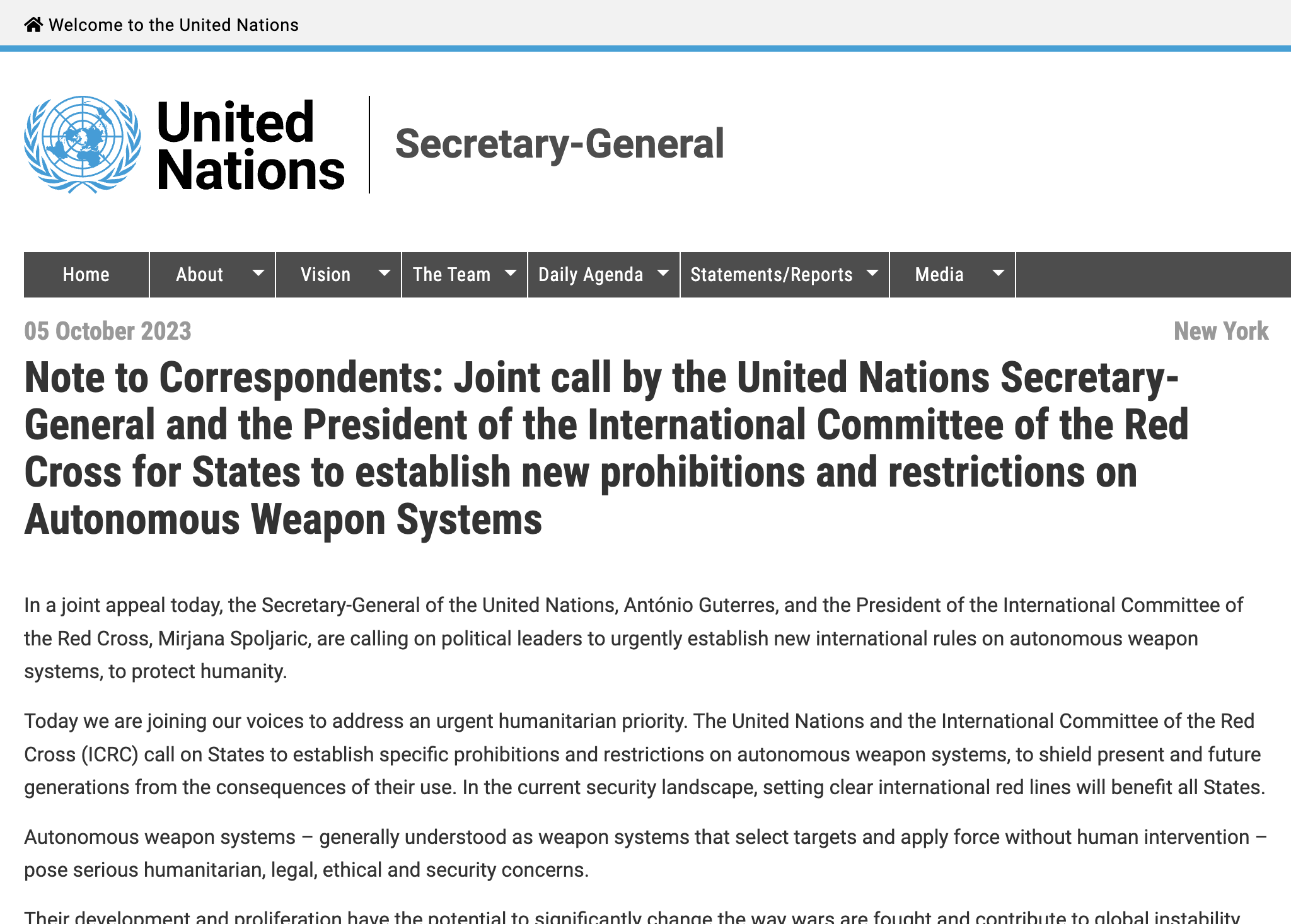

Oct 2023

First-ever resolution on autonomous weapons tabled at the UN General Assembly

UN Secretary-General and ICRC President call for States to negotiate a treaty on autonomous weapons systems by 2026


What's being done about it?

The International Committee of the Red Cross (ICRC) Position

We do not need to be resigned to an inevitable future with Slaughterbots. The global community has successfully prohibited classes of weaponry in the past, from biological weapons to landmines.

Building on those precedents, the International Committee of the Red Cross (ICRC) recommends that states adopt new legally binding rules to regulate autonomous weapons.

Importantly, the ICRC does not recommend prohibiting all military applications of AI, only specific types of autonomous weapons. Many military AI applications already in use, such as automated missile defense systems, do not raise such concerns.

Find out more about the available solutions:

The ICRC Position:

The ICRC recommends three core pillars:

1: No human targets

Prohibition on autonomous weapons that are designed or used to target humans.

2: Restrict unpredictability

Prohibition on autonomous weapons with a high degree of unpredictable behaviour.

3: Human control

Regulations on other types of autonomous weapons combined with a requirement for human control.

Their full position can be found here:

The risks

What risks do autonomous weapons systems pose?

Unpredictability

Autonomous weapons systems are dangerously unpredictable in their behaviour. Complex interactions between machine-learning-based algorithms and a dynamic operational context make it extremely difficult to predict how these weapons will behave in real-world settings. This is by design; these weapons systems are programmed to act unpredictably in order to remain one step ahead of enemy systems.

Escalation

Given the speed and scale at which they are capable of operating, autonomous weapons systems introduce the risk of accidental and rapid conflict escalation. Research by RAND found that “the speed of autonomous systems did lead to inadvertent escalation in the wargame” and concluded that “widespread AI and autonomous systems could lead to inadvertent escalation and crisis instability.”

Proliferation

Slaughterbots do not require costly or hard-to-obtain raw materials, making them extremely cheap to mass-produce. They’re also safe to transport and hard to detect. Once major military powers begin manufacturing them, these weapons systems are bound to proliferate. They will soon appear on the black market, and then in the hands of terrorists seeking to destabilise nations, dictators oppressing their populations, or warlords intent on ethnic cleansing.

Selective Targeting of Groups

Selecting individuals to kill based on sensor data alone, especially through facial recognition or other biometric information, introduces the risk of selectively targeting groups based on perceived age, gender, race, ethnicity or religious dress. Combined with the threat of proliferation, autonomous weapons could greatly increase targeted violence against specific groups of people, up to and including ethnic cleansing and genocide.

Learn more about the risks of autonomous weapons:

Global debate

What is the current debate around autonomous weapons?

Over 100 countries in support of a legally-binding instrument

Over 100 countries support a legally-binding instrument on autonomous weapons systems, including all of Latin America, 31 countries in Africa, 16 in the Caribbean, 15 in Asia, 13 in Europe, 8 in the Middle East and 2 in Oceania.

As more and more states join the call for a treaty on autonomous weapons, the countries blocking these efforts find themselves in a shrinking minority.

After nearly ten years of discussions at the United Nations Convention on Certain Conventional Weapons (CCW) in Geneva, a large number of states have come to support the 'two-tier' approach of prohibition and regulation of autonomous weapons systems. This approach would prohibit systems that are designed or used in a manner whereby their effects cannot be sufficiently predicted, understood and explained, and regulate all other systems to ensure sufficient human control. Sufficient human control could include limits on the types of targets and on the duration, geographical scope and scale of use.

Since February 2023, countries have begun to host their own regional conferences outside of the CCW to discuss autonomous weapons. This is a crucial step towards a treaty, as countries can participate in discussions unburdened by the CCW's consensus rule, which has long paralysed the forum. As more regions host conferences, countries that are not members of the CCW, and have therefore been unable to take part in the UN discussions on autonomous weapons systems, can come on board.

UN CCW - ‘Road to Nowhere’

The United Nations' Convention on Certain Conventional Weapons (CCW) in Geneva has been discussing autonomous weapons since 2013. That year they set up informal ‘Meetings of Experts’ to address what was then an emerging issue. In 2016, this was formalised into a Group of Governmental Experts (GGE) to develop a new ‘normative and operational framework’ for states’ consideration. Three years later, the CCW adopted the GGE’s suggested eleven guiding principles.

In 2021 the world saw the first uses of autonomous weapons in combat. If it had not been already, the need for a legally binding protocol was now self-evident. So, when the landmark CCW Review Conference of December 2021 could not even agree to start negotiating a protocol, the Convention was widely seen as ‘having failed’.

The GGE has since stalled. In July, a majority of delegations got behind a promising draft, which was then stripped of all that promise by a persistent blocking minority. By now, as Ray Acheson, Director of Disarmament at the Women's International League for Peace and Freedom (WILPF), put it, the CCW has proven itself to be a ‘road to nowhere’.

Almost ten years since these discussions began, autonomous weapons are spreading fast, yet they remain unregulated. The world can no longer afford to wait for this Convention to get something done. States must find another forum through which to reach a legally-binding instrument.

Our Common Agenda - The Future of the United Nations

At the 2021 General Assembly, the U.N. Secretary-General presented a 25-year vision for the future of global cooperation through reinvigorated, inclusive, networked and effective multilateralism. The report identifies "establishing internationally agreed limits on lethal autonomous weapons systems" as key to humanity's successful future.

Help us prevent Slaughterbots before it's too late.

Once autonomous weapons systems are unleashed upon the world, there is no going back. Take the pledge to help us build a strong case against autonomous weapons.

Organisations

What organisations are working on this issue?

Articles

Content we have published on the topic of Autonomous Weapons:

Myths vs. Fact: Autonomous Weapons and the Global Effort to Control Them

Do AI weapons reduce casualties? Are autonomous weapons ethical? We debunk the biggest misconceptions about AI in war and its impact on humanity.

Key Forums Shaping the Global Debate on Autonomous Weapons

A quick summary of all the main political international forums where the topic of Autonomous Weapons is, or has been, actively addressed.

The Political Landscape: How Nations are Responding to Autonomous Weapons in War

How are nations responding to the rise of AI in war? A reference guide to the most prominent positions that countries have taken in the global debate on Autonomous Weapons.

News feed

See the latest news updates on the issue:

UN Secretary-General, President of International Committee of Red Cross Jointly Call for States to Establish New Prohibitions, Restrictions on Autonomous Weapon Systems

United Nations

Why the Pentagon’s ‘killer robots’ are spurring major concerns

The Hill | By Brad Dress

Philippines lobbies UN for rules on development, use of killer robots

BusinessMirror | By Malou Talosig-Bartolome

See the full history of autonomous weapons coverage: